An NHTSA for gen AI.

AI must be regulated. How could we do it? Maybe like cars?

There are some pretty special hot takes around about regulating AI. Some say we cannot possibly regulate generative AI because it’s a black box, or because “people” will run their own AI locally[1]. Others say no-one knows what artificial intelligence is[2] and, after playing semantic games for thirty pages, wind up saying it cannot be regulated. And there’s the tired “regulate behaviour, not technology”[3].

We need AI regulation for corporations that, as a business, make AI SaaS products like ChatGPT or Claude publicly available. We must regulate those corporations’ behaviour, and their technology, insofar as they cause harms.

If you only remember one word from this article, let it be this: “harms”. I’ve titled this piece a National Highway Traffic Safety Administration for AI to make us think about “harms”, and about how to stop them.

Cars are a technology that has scale, like generative AI, and regulation of cars makes absolute sense. Just ask survivors of Ford Pinto ownership. As ASIC chair Joe Longo said last year, we already have laws governing a lot of the actions of companies. We’re regulating Google and Facebook. But those companies took four years to reach 100 million users, where ChatGPT did it in four months. Harms at that scale necessitate regulation.

Over-hyped claims, free tiers enabled by investor money, and the lack of any semblance of ethics on the part of the vendors are causing millions of regular people to download AI apps and then do anything and everything with them, resulting in harms at huge scale.

Enterprise customers are being shamed into spending $30-40 billion on poorly planned “AI agent” rollouts and seeing a 95% failure rate, likely because most are thin wrappers around calls to AI vendors’ products.

To say that building AI locally “routes around” regulation is weirdly naive and obviously wrong. It’s like saying you cannot regulate the auto industry because “people” will build their own cars. That is trivially true, since enthusiasts can and do build their own, but completely false in that fans making things in their home garages do not somehow stop automobile regulation.

I don’t like analogies in tech, and I dislike car analogies even more. But how can I explain to folks who struggle with any kind of nuance that AI regulation can work, will work, and what that actually looks like? It looks like I’m stuck with making another bad car analogy: so help me, I hope it works. Here goes.

AI regulation does not ban AI any more than auto industry regulation bans cars.

The “Why AI should not be regulated” people get mired in definitions. As I have said, the only thing it makes sense to regulate strongly right now is generative AI SaaS products of all kinds. This means AI regulation is not hitting the AI in your phone or video game, or even the AI built locally by an enthusiast, a researcher or an insurance company’s software engineer. It will hit if that company builds a local solution by calling an AI vendor’s SaaS product API, but it will hit on the vendor side.

There are commentators using the word “ban” in generative AI regulation discussions in order to gin up animus against government intervention. They want people to think their freedoms will be impacted.

When I worked at Google in Mountain View, CA in 2007-2008, the Ads Serving system I worked on had two powerful AI clusters integrated into it. Since I came back from the USA to Australia and worked in consulting, building systems for enterprise, I have seen a steady increase in AI in projects. To me AI is just computers. Which (checks notes) are not being “banned”.

In his speech the ASIC chair ranged across some tech regulation cases from history, like the IAG insurance one, and hypothesised about credit card companies biasing their credit decisions based on AI algorithms. But this is the wrong target.

It makes absolutely no sense to say to an insurance company “you can’t use AI to make risk decisions”. Instead, ASIC can go after them for their actions in response to customer complaints and, if it’s fraudulent, prosecute. We in Australia had the RoboDebt scandal: it was exposed by investigations, and no specialist AI law was needed to check the behaviour of those who implemented life-ruining algorithms.

But AI vendors are building commercial systems, using the Model Context Protocol and APIs, that let enterprise customers use agents at scale to make the kinds of decisions Mr Longo was worried about. That has to be regulated.

There is no point in trying to regulate implementation decisions inside an insurance company because they might be “AI”. People yelling about that are, I think, trying to muddy the waters in the hope that AI regulation will be seen as “too hard”.

No-one is banning “AI” because it’s just computers and we’re not banning computers.


When Google published a paper titled “Attention Is All You Need”, things took a turn. Sam Altman got hold of a lot of investor money, took private that taxpayer-funded, publicly owned research data that was supposed to be used for science, and created a truly massive type of generative AI called a large language model. His goal was to own and corner the AI market.

It’s these avaricious, monopolistic vendors that must be regulated first, followed by any entity publishing AI products as a business. And it’s happening already.

Technology Neutral Means a Free Pass to Extractive, Dangerous AI

Venture capital firms, investors in AI, and the companies themselves try to sound reasonable and measured, saying we should be “technology neutral”. Here’s Jai Ramaswamy from VC firm Andreessen Horowitz (a16z):

A precautionary approach that inevitably regulates the math and the code used to build an AI model would, by contrast, restrict development without preventing misuse.

When AI proponents and investors say “don’t regulate technology, regulate the behaviour” and “we already have laws for that”, they want governments to believe that controlling bad outcomes from AI is a solved problem: you just “use existing laws”.

The airy, self-serving, high-handed pretzel logic in the Ramaswamy piece blows me away. How terrible to regulate fire just because someone commits arson, Ramaswamy says. But shouldn’t flamethrowers be regulated? We restrict those to the military, right? In criminal law there’s a concept of weaponising an object so that it causes harm. When OpenAI made its chatbot sycophantic and it caused psychosis, that harm needed fixing. But they’re fighting that too.

We are Regulating AI. Here’s why.

When you market a SaaS product as being a fount of wisdom that anyone can talk to, people are going to talk to it. When the product is built to be sycophantic, to wheedle and groom its “users” into dependency, and cut them off from others, then it crosses a line into a harmful product that requires regulation.

We have regulated many other products in the last two hundred years: cigarettes, asbestos and, lately, social media. The makers of those screamed, resisted and tried to cover up their harms. They said the good of their products outweighed the harms, and that regulation was stifling their innovations. In my opinion the so-called “good” of large language models is not established at all. But the harms really are. The work of journalist and past OpenAI insider Karen Hao in uncovering this is essential viewing.

Since this video was made, Hao has been highlighting cases of AI psychosis in intelligent, thoughtful people who were drawn into a dark spiral after first using the product for pedestrian tasks like internet search and writing help. If you think you are too smart for this to happen to you, how do you know you are not about to join the club of folks who are told they’re smart, then led by ego into a psychotic state over days or weeks of AI use? In the last week Hao has been reporting on several new lawsuits alleging harms from generative AI systems.

How to Understand AI Regulation

OK: even if you hate AI regulation, and neither the above nor my other work has convinced you it’s desperately needed, come along with me for a look at how to build the implementation so that it is actually good.

This is the guts of this article: I want to talk about how AI regulation can be understood. To me it’s obvious, but the more I talk to people, the more I realise that we have AI boosters who fear their favourite toy box is going to be taken away, and non-technical folks who say things like “AI needs to be more ethical”. Here are, as I see them, the main principles for regulating LLM and chatbot SaaS products:

There is no sense in talking about AI ethics without law & public policy

AI vendors will never “be ethical” without effective regulation.

Regulation needs to force changes at the interface between users and product

Regulation needs to force changes in data encoding and other places too

Expertise is required in the public sector to analyse AI usage patterns for harm

Law and policy need to make AI vendors liable for these harms

We must not get distracted by the war of words and the rabbit hole of semantics around AI; instead we should focus on harms and scale. When you have 100 million users of a product, many of them children, many not equipped to deal with the intoxicating nature of chatbots, you have a prime area for regulation. Of course there are others. But in my view the place to start is with actual harms at scale, not with trying to police video game AI, or an insurer using biased algorithms for fraudulent profit.

AI Ethics is Useless

Search and you’ll find a million experts telling you about AI ethics. Everyone has an opinion about how AI vendors ought to behave. But there is no sense in talking about AI ethics unless there is a state-level framework to force AI vendors to be ethical.

Unless you make factory owners stop pumping mercury into drinking water, they are going to keep doing it, all the while telling you how ethically they are poisoning everyone. The rubber hits the road when an inspector shows up, tests the water and shuts the factory down. Whining about how it’s a “nanny state” or “fascist” or “bad for innovation” earns no sympathy from me.

These are extractive corporations complaining that they want a free ride to pollute the commons with their harms and to steal from the public. A decent, effective regulatory climate helps those who want to run ethical businesses but cannot keep up with the crooks and bad actors. We regulate:

Nuclear power plants

Genetic engineering and cloning

Milk

Cars

Do you like drinking milk with melamine? That’s an industrial chemical used in building products like bench-tops. In China a company tried to get more saleable milk out of less cow by watering it down and adding this poisonous compound to fake the protein content. Want to have cars that explode when you drive them, or pollute when they’re supposed to be clean? No?

Well, welcome to regulation, because that is the only thing forcing manufacturers to behave. Market forces, voting with your wallet, and all the free market crap will never ever make AI ethics a reality. The only thing that will is legislation.

Regulation Must Act at the Interface

What if we had a National Highway Traffic Safety Administration for AI? A body of researchers and experts with knowledge of data centre technology, SaaS products, popular generative AI and other tech products that can cause harms at scale? They could inform investigators in ASIC and its equivalents, in consumer protection agencies, and even in the Federal Police.

When the Toyota Prius landed in the USA, a huge amount of junk science generated by knockers and haters accused the Prius of every evil under the sun. It took many years of investigations to prove that the Prius’s so-called “drive-by-wire” system was not responsible for runaway acceleration.

Drivers pressed the accelerator pedal instead of the brake

They didn’t want to admit fault so they blamed the car

It was the NHTSA that eventually got to the bottom of the problem, examining the cars’ black-box recorders, accident scene data and other information to diagnose what happened. The point of this car-versus-tech analogy is that regulation can be fair both to innovative companies and to the public, if its enforcement is properly resourced by competent government bodies.

You need to have:

experts in research and technology

investigators and enforcement at the interface

Where the rubber hits the road is where the investigators need to be, seeing what the consumers see. The experts are the scientists who then provide dispassionate analysis. Together they can manage regulation effectively, and also feed improvements back to lawmakers over time.

We have nuclear inspectors and the IAEA. We have the CDC and CISA. At present there is no agency with oversight and expertise to investigate AI harms.

The Interface: the AI meets the User

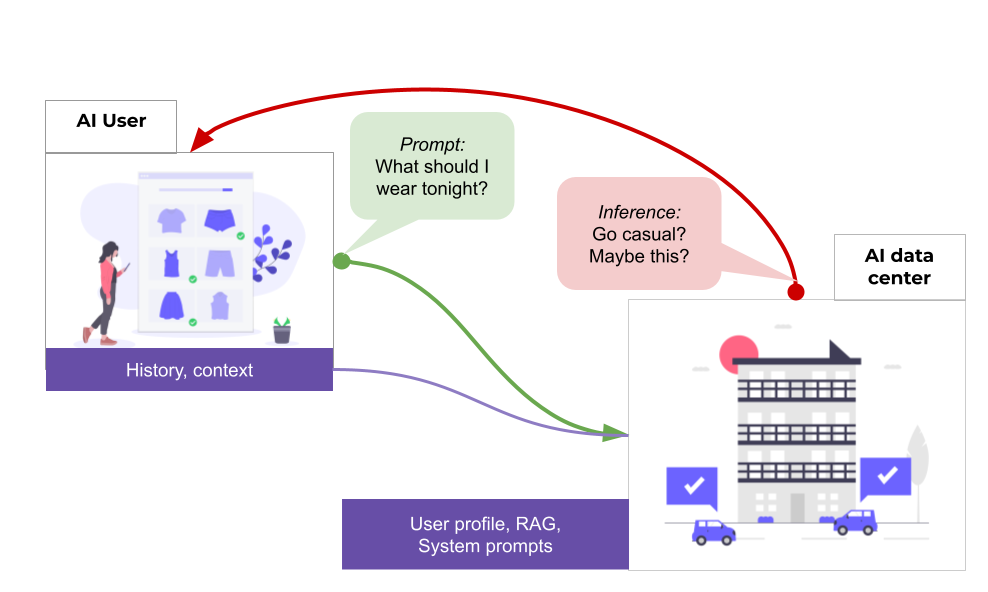

In this diagram I’m an AI user on my phone (top left) with an AI chat product. I send the prompt “What should I wear tonight?” and I get the result “Go casual? Maybe this?” An image of clothing choices is sent to my app.

A lot goes into this. The vendor of the AI SaaS product captured my details, including my credit card, when I set up the app on my phone. Very recently OpenAI has started looking at serving ads in ChatGPT, so it likely collects the same sort of data that Facebook and Google use to track my spending habits.

But it has other important context. First, there’s data stored on my phone from past chats. Second, in the data centre where the AI operates, my user profile holds knowledge about me too: my gender and the kinds of things I might like, along with what time and date it is where I am. The season, for example, might be relevant: January is winter in the Northern Hemisphere but summer in Australia.

Most importantly, there’s retrieval-augmented generation, or RAG. Because a large language model is trained at some point in the past and then put into service, the data encoded in it is a snapshot of the world as it was at training time. To get up to date with current events, such as new clothing trends, it might run an internet search and include the results.

All of this data goes into the context of my query, and then, finally, inference takes place, producing a text result in folksy, vernacular language. Since it’s been trained on our chat logs, it’s very good at sounding like my BFF.

The net effect is that it seems like a continuous dialogue with a responsive, knowledgeable entity. This is a clever illusion, though: LLMs do not think, and they are not a singular entity. They are not “my ChatGPT”. They look at all the context and your prompt, then produce an output.
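For the technically curious, here is a rough Python sketch of that assembly step. It is not how OpenAI or anyone else actually does it (vendors don’t publish this), and every name in it, such as `UserProfile`, `search_web` and `build_context`, is hypothetical. What it shows is that each request is answered from one freshly built block of text: profile data, earlier chat, retrieved documents and my prompt, stitched together.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical server-side profile; real vendors' schemas are not public.
@dataclass
class UserProfile:
    gender: str
    interests: list[str]
    hemisphere: str  # "north" or "south"

# Meteorological seasons for the Northern Hemisphere, keyed by month.
NORTHERN_SEASONS = {
    12: "winter", 1: "winter", 2: "winter",
    3: "spring", 4: "spring", 5: "spring",
    6: "summer", 7: "summer", 8: "summer",
    9: "autumn", 10: "autumn", 11: "autumn",
}
OPPOSITE = {"winter": "summer", "summer": "winter", "spring": "autumn", "autumn": "spring"}

def season_for(today: date, hemisphere: str) -> str:
    # Seasons are flipped in the Southern Hemisphere (January is summer in Australia).
    season = NORTHERN_SEASONS[today.month]
    return season if hemisphere == "north" else OPPOSITE[season]

def search_web(query: str) -> list[str]:
    # Hypothetical stand-in for the retrieval step of RAG; a real service would query a search index.
    return [f"[search result for: {query}]"]

def build_context(prompt: str, chat_history: list[str], profile: UserProfile, today: date) -> str:
    # The model keeps no memory between calls: everything it "knows" about me
    # for this turn is whatever gets concatenated here.
    parts = [
        f"Date: {today.isoformat()} (season: {season_for(today, profile.hemisphere)})",
        f"User profile: gender={profile.gender}; interests={', '.join(profile.interests)}",
        "Earlier chat:",
        *chat_history,
        "Retrieved documents:",
        *search_web(prompt),
        f"User: {prompt}",
    ]
    return "\n".join(parts)

profile = UserProfile(gender="not stated", interests=["running", "linen shirts"], hemisphere="south")
context = build_context("What should I wear tonight?", ["User: I have a dinner on Friday."], profile, date(2025, 1, 15))
print(context)  # this whole string, not a persistent "my ChatGPT", is what inference runs on
```

Run it twice with a different date or hemisphere and the “same” assistant gets different context, which is the illusion of a continuous entity taken apart.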

But this is the customer experience; in road-safety terms, this is where the rubber hits the road. And if there are no hard-and-fast mechanisms operating here to keep people safe, then the harms of AI chatbots, and their sycophantic lure, will continue.

Regexes, Filters, Mechanisms

In March 2023 Brian Hood had had enough of ChatGPT saying he was an embezzler and a criminal. Hood threatened to sue OpenAI, demanding that it make its service stop telling lies about him within 28 days or pay a huge sum of money in compensation. OpenAI rushed out a patch. According to some commentators, the fix is as simple as a regex: a blunt instrument of software development that matches text patterns.

Think of a regex as a hard interlock. When you absolutely want something to work, it cannot be a “fuzzy match”, a soft control. A regex is a hard stop that works outside the bounds of the LLM’s neural network, and no matter how much you hack the prompts, you cannot break out of that hard stop. It’s a mechanism, whereas a neural network is a probabilistic construct.
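To show what “outside the LLM” means in practice, here is a minimal Python sketch of a regex interlock applied to model output after generation. Nobody outside OpenAI knows exactly how its filter is built; `BLOCKED_NAMES`, `name_pattern`, `interlock` and the refusal text are all hypothetical. The point is that this is ordinary deterministic code that the model never sees, so no prompt can argue it out of running.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKED_NAMES = ["Brian Hood"]

def name_pattern(name: str) -> str:
    # Escape each word, allow any whitespace between words, and anchor on word
    # boundaries so "Brian Hoodie" is not caught.
    words = [re.escape(w) for w in name.split()]
    return r"\b" + r"\s+".join(words) + r"\b"

BLOCK_RE = re.compile("|".join(name_pattern(n) for n in BLOCKED_NAMES), re.IGNORECASE)
REFUSAL = "I'm unable to produce a response."

def interlock(model_output: str) -> str:
    # Runs after generation, in ordinary code outside the model.
    # Nothing the user types can change what this function does.
    if BLOCK_RE.search(model_output):
        return REFUSAL
    return model_output

print(interlock("Here is what I know about brian  hood."))
print(interlock("A hoodie and jeans would work."))
```

It also shows why a regex is a blunt instrument: it blocks every mention of the name, including harmless ones about anyone else who happens to share it.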

There are countless amateur AI hackers who will offer you their prompts to “unlock your AI”, working around the controls built into the system prompts. As far as I know, there are no public exploits proven to get around the fact that ChatGPT will not say “Brian Hood”.

Seat Belts for LLMs like ChatGPT.

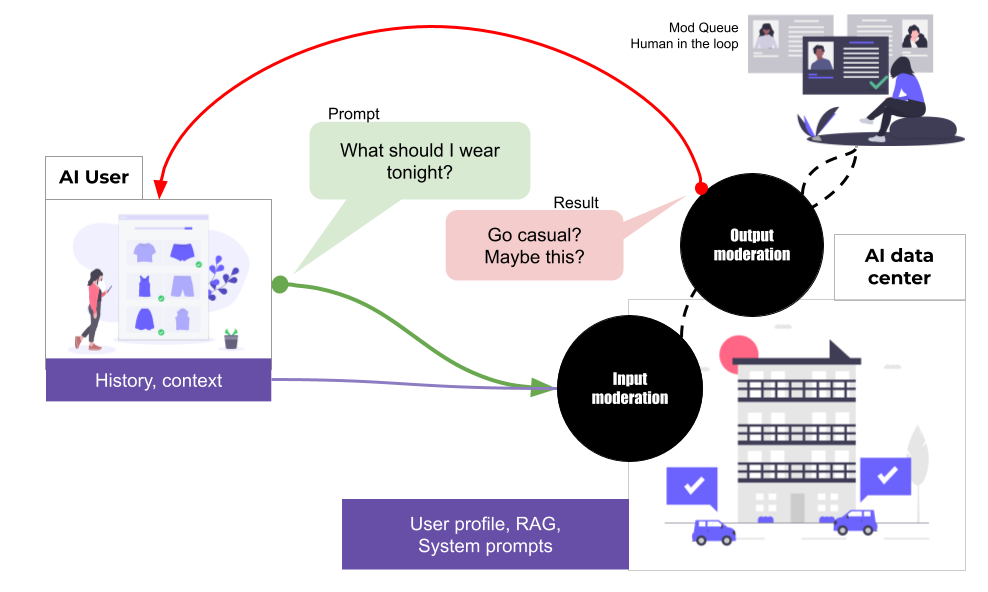

In my opinion, decent AI legislation should impose moderation controls on all AI vendors who offer their product for public use. If it’s available as an app or via an API, whether free or by subscription, and it has the kinds of harms I’ve talked about above, then interlock-style moderation that lies outside the LLM is a minimum.

And we know it can be done, thanks to Brian Hood. The regex is a bit clunky, but the last company I worked at had a lot of moderation controls built in, and without giving away anything I’m not allowed to talk about, it might have looked like this:

Inputs can be moderated to detect problematic requests about, for example, mental health (which AI is not appropriate to deal with), weapons or illegal activities. On the output side, a convolutional neural network (CNN) can be trained to detect, for example, medical or pornographic images. Other tools can detect medical or psychiatric advice. These moderation systems can be modularised and operate on a cascade basis, with many different detection topics. If a reject threshold is met, the output is flagged, an error message is shown to the user, and the incident goes on a queue for a human in the loop to review.
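Here is a rough Python sketch of that cascade. It is not the system I worked on: the topics, keywords and thresholds are hypothetical, and the toy `keyword_scorer` stands in for trained classifiers such as a CNN for images. What it does show is the shape: detectors run in order, the first one whose score meets its reject threshold stops the cascade, the user gets an error message, and an incident is queued for a human. The same `moderate` function could be applied to both the user’s input and the model’s output.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable

@dataclass
class Detector:
    topic: str
    score: Callable[[str], float]  # 0.0 to 1.0; stand-in for a trained classifier
    reject_threshold: float

@dataclass
class Incident:
    user_id: str
    topic: str
    score: float
    text: str

ERROR_MESSAGE = "Sorry, I can't help with that."
REVIEW_QUEUE: deque = deque()  # incidents waiting for a human in the loop

def keyword_scorer(keywords: list[str]) -> Callable[[str], float]:
    # Hypothetical toy scorer: fraction of keywords present in the text.
    def score(text: str) -> float:
        lowered = text.lower()
        return sum(k in lowered for k in keywords) / len(keywords)
    return score

# Hypothetical topics, keywords and thresholds, for illustration only.
DETECTORS = [
    Detector("mental_health", keyword_scorer(["hopeless", "hurt myself", "end it all"]), 0.3),
    Detector("weapons", keyword_scorer(["firearm", "explosive", "detonator"]), 0.3),
    Detector("medical_advice", keyword_scorer(["dosage", "diagnosis", "prescription"]), 0.3),
]

def moderate(user_id: str, text: str, detectors: list[Detector] = DETECTORS) -> tuple[bool, str]:
    # Cascade: run detectors in order and stop at the first one that meets its threshold.
    for detector in detectors:
        s = detector.score(text)
        if s >= detector.reject_threshold:
            REVIEW_QUEUE.append(Incident(user_id, detector.topic, s, text))
            return False, ERROR_MESSAGE
    return True, text

ok, reply = moderate("user-123", "What dosage of this should I take?")
print(ok, reply, len(REVIEW_QUEUE))
```

Because each detector is just an entry in a list, adding a new detection topic means adding a new entry, which is what makes the modular, cascading design practical.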

Are these moderation systems foolproof? No, they’re not. They’re classifiers. They try to answer a question like “is this a medical image?” and say “yes” or “no”. The cost of the system being wrong is a user not getting the image they were trying to create. That trade-off might be the right one if the user is under 18. If an account accumulates a number of flags, the human moderator might mark it for review or intervention.
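That trade-off can be made explicit in code. In this minimal Python sketch the policy numbers (`ADULT_THRESHOLD`, `MINOR_THRESHOLD`, `FLAGS_BEFORE_REVIEW`) and the function names are hypothetical, chosen only to illustrate the idea: a lower reject threshold for under-18 users accepts more false positives in exchange for fewer misses, and repeated flags escalate the account to a human.

```python
from collections import Counter

# Hypothetical policy numbers, picked only to illustrate the trade-off.
ADULT_THRESHOLD = 0.8
MINOR_THRESHOLD = 0.4
FLAGS_BEFORE_REVIEW = 3

flags = Counter()  # per-account count of blocked outputs

def threshold_for(age: int) -> float:
    # A lower threshold blocks more often: more false positives (a wanted image
    # is refused) in exchange for fewer harmful outputs getting through.
    return MINOR_THRESHOLD if age < 18 else ADULT_THRESHOLD

def decide(user_id: str, age: int, classifier_score: float) -> str:
    # Returns "allow", "block", or "block_and_review" for one classifier result.
    if classifier_score < threshold_for(age):
        return "allow"
    flags[user_id] += 1
    if flags[user_id] >= FLAGS_BEFORE_REVIEW:
        return "block_and_review"  # escalate the account to a human moderator
    return "block"

for score in (0.5, 0.6, 0.9):
    print("teen:", decide("teen-1", 15, score), "| adult:", decide("adult-1", 30, score))
```

The same classifier score can be allowed for an adult and blocked for a teenager; where to set those numbers is a policy decision, which is exactly the sort of thing a regulator could require vendors to justify.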

Isn’t OpenAI already doing this?

There is a lot of pressure on OpenAI and other vendors to protect vulnerable people from the harms of their products. As a result, they’ve been working with experts to try to wallpaper over these concerns by post-training for alignment. But alignment and system prompts operate inside the LLM and can be hacked, which makes them useless as guardrails.

OpenAI’s website has a lot of information about its program of responses to the global mental health crisis colliding with sycophantic chatbots. The problem is that there are no nuclear inspectors looking inside. There are no crash investigators measuring the skid marks. And there’s no hard stop. No mechanism. At the end of the day, the profit motive wins.

What we have now is “OpenAI, please be ethical” and there’s no Inspector of AI to make sure they’re doing it.

[1] “When Code isn’t Law”, Oxford Academic, Policy & Society; and Reddit: “Will Most people eventually run AI Locally”

[2] “Why ‘Artificial Intelligence’ Should not be Regulated”, Daniel Braun. That paper spends two-thirds of its length fighting over definitions that don’t matter. Look at harms, and regulate large-scale sources of those harms.

[3] Paraphrased from “Governing AI: A First Principles Approach”, unctuous, self-serving pap by a16z, a VC firm invested in AI: A precautionary approach says that if fire can be used for arson, we should only allow fire for cooking food. Just prohibit arson, not fire itself. “AI risks” are already covered by law. Discrimination in lending is covered by fair banking laws. Deceptive business practices are covered by consumer protection statutes. A true risk management approach is mapping where AI introduces new risks, identifying real gaps in oversight, and addressing those gaps proportionally. A precautionary approach that inevitably regulates the math and the code used to build an AI model would, by contrast, restrict development without preventing misuse.